Streamlining Structural Design for Civil Engineers

We explored how civil engineers use various platforms to design and test buildings. By implementing an AI-assisted beam suggestion tool, we helped engineers reduce time spent on routine calculations while maintaining full control over critical design decisions.

Overview

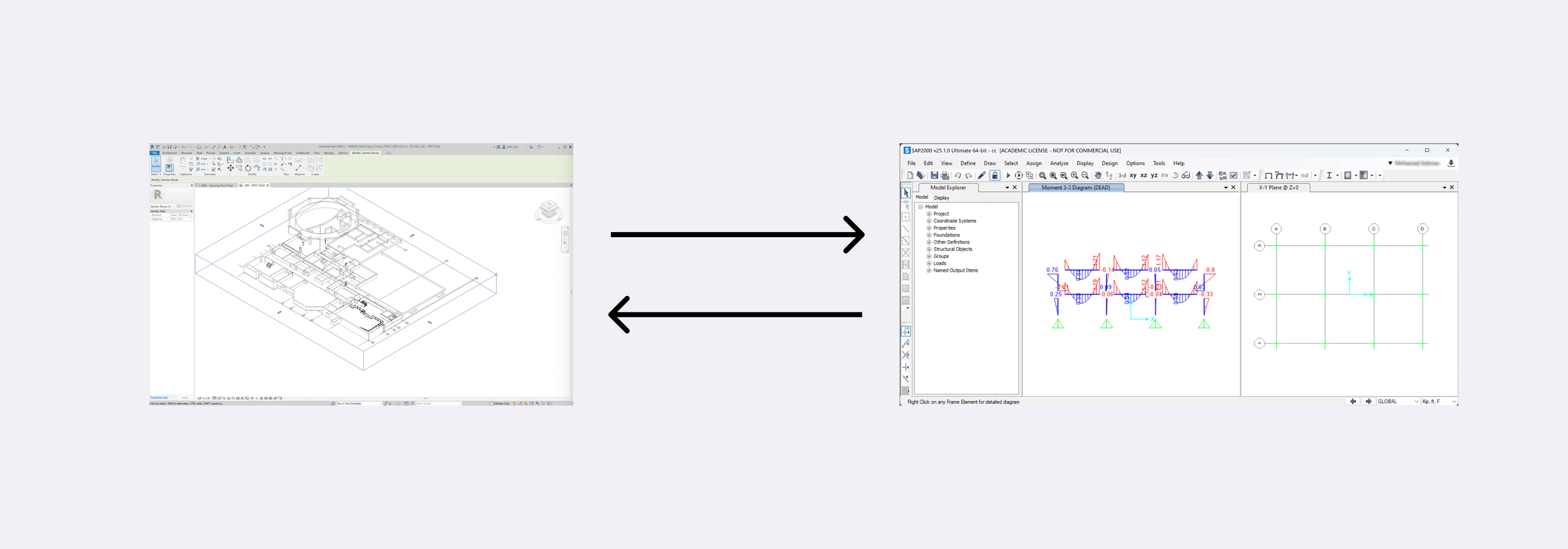

This project aims to improve the efficiency of the tools that civil engineers, specifically structural engineers, use. Structural engineers face significant challenges in designing the safe and effective buildings that make up our cities. Typically, their role is to model buildings in several software packages to verify structural integrity. This process is disjointed and iterative: it requires many steps and forces engineers to bounce between many programs.

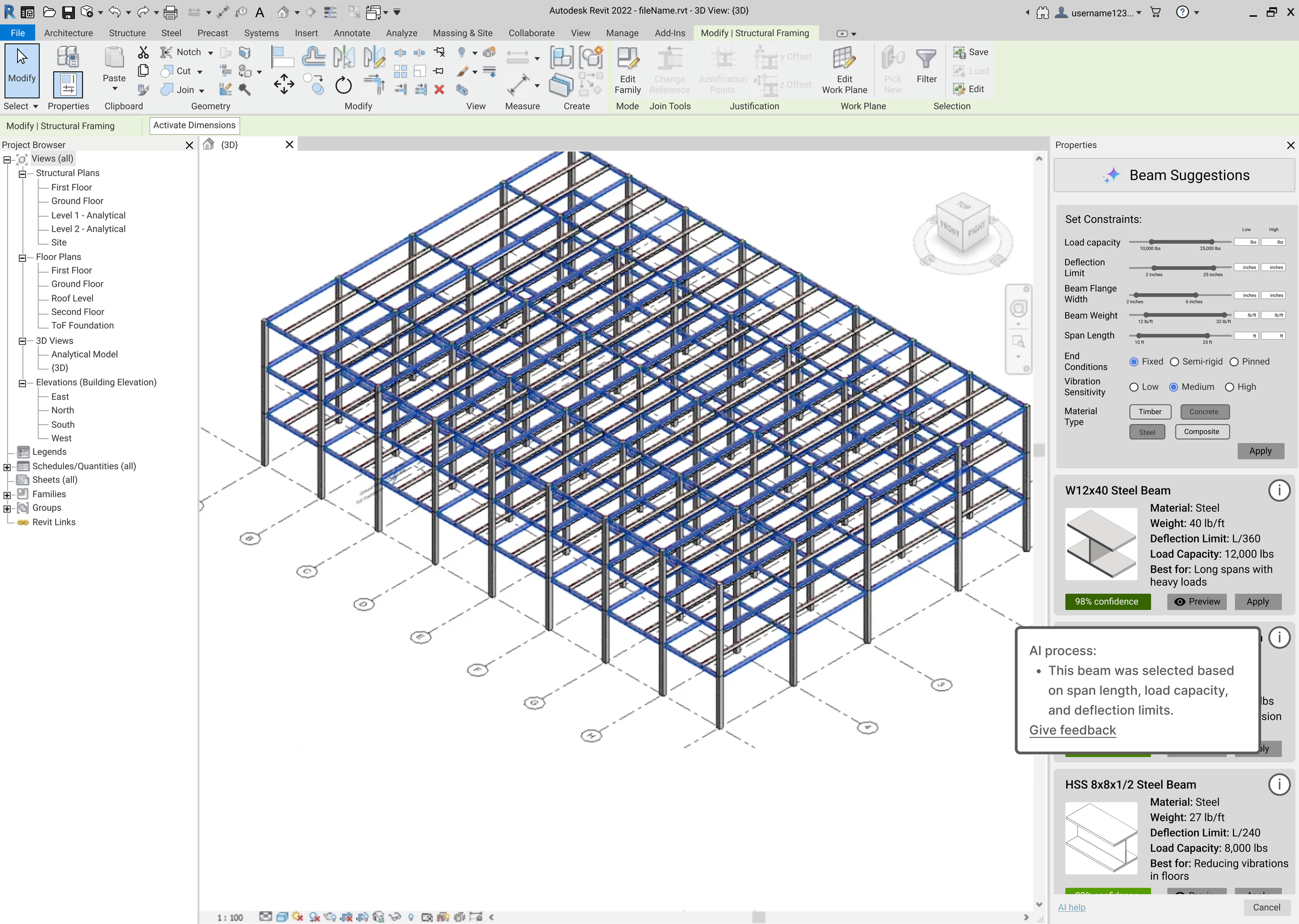

We worked to speed up the engineering process so that engineers have more time to design safe and modern buildings. We found that engineers, architects, and drafters all use Revit, a platform for modeling buildings. As such, we decided to build on that platform in a way that would allow structural engineers to make quick changes directly on the models they are testing. Based on in-depth interviews with civil engineers, we developed a tool that uses AI to generate beam suggestions based on the structural elements of the model. In addition, our tool could help architects and drafters account for structural issues, reducing the workload of structural engineers.

This was a project for a class titled “Data Driven UX/Product Design” and was completed in 8 weeks. I was the team lead of a group of 4 students.

Discovery Phase

We decided to focus on civil engineers and their workflows. We looked at how they complete their daily tasks through contextual inquiry and interviews. This gave us insight into various issues civil engineers face, such as constantly switching between platforms, repetitive tasks, steep learning curves for new software, and strained collaboration.

Initial Research Questions

For our initial research, we wanted to gain an understanding of civil engineering as a whole and assess any issues engineers may experience. Specifically, we had 5 main research questions:

What does a typical workflow look like for civil engineers?

What software platforms are currently being used and how do engineers interact with them?

How do engineers collaborate to draft up the designs of buildings?

What inefficiencies exist in civil engineers’ workflows?

When using many platforms, how do engineers transition between these different software tools?

Research Methods

We conducted interviews and contextual inquiry with civil engineers to better understand their workflows and how their tools could be improved. We interviewed three engineers (one project manager and two structural engineers) and conducted contextual inquiry with one design engineer.

Interviews (30-60 minutes):

In the interviews, we first asked about structural engineers' typical workflows and then had them show us some of the software they use. These were semi-structured interviews conducted online through Zoom. We chose interviews to gather rich information about how civil engineers work. During the interviews, participants shared their screens and navigated the platforms they were talking about. This allowed us to observe how structural engineers interact with this software.

Contextual Inquiry (> 60 minutes):

We conducted contextual inquiry with a design engineer at an engineering firm in San Diego. We asked about similar topics as in the interviews and watched our stakeholder work. We chose contextual inquiry to get a more realistic view of our stakeholder's workflow. It also allowed us to take note of how people interact with building design software and gain insight into any physical constraints that come with working in an engineering firm.

Key Insights

From our interviews and contextual inquiry, we found that collaboration on these projects got complicated, that many tasks could be automated, and that the various software tools are hard to balance and learn.

Building Background (RQ1 & RQ2)

💡 Key takeaway: We found that civil engineering is a very complex and iterative process that requires many steps.

To give context to the rest of our findings, we outline what a project's general process looks like below:

The general structure is drawn up in software like Revit or AutoCAD, typically by an architect or drafter

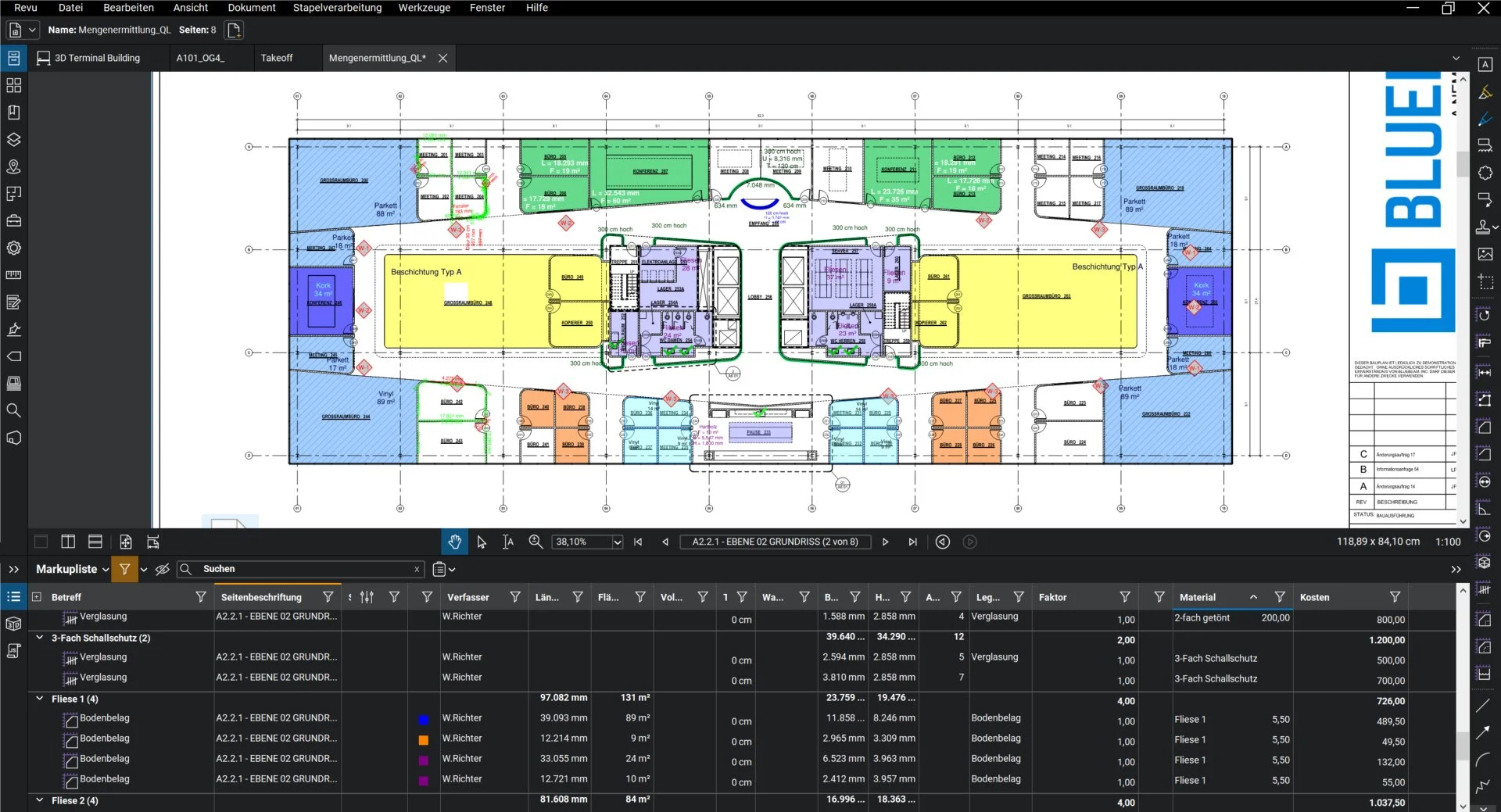

Design engineers, structural engineers, and project managers use Bluebeam to leave comments on a PDF file of the drawings based on their engineering knowledge

Based on initial feedback, iteration occurs on the drawings

The building is then mocked up in analysis software to test the structural integrity of the design (various programs are used; most commonly SAP or ETABS)

Based on the forces on elements of the building, engineers leave comments in Bluebeam

This process happens iteratively until comments are resolved

Collaboration Through Comments (RQ3)

💡 Key takeaway: Engineers rely on Bluebeam for feedback, but its separation from design tools sometimes causes workflow inefficiencies.

Collaboration on projects typically occurred through Bluebeam, a PDF editor specialized for architectural diagrams. To add comments, one must first import the document into Bluebeam. Our first interviewee uses this software to give feedback on permit requests. Comments there take the form of redlines or clouds and can be overlaid on the part of the design being discussed. People can also respond to comments and have discussions on the platform. Due to the many steps required to bring a design into Bluebeam, our participant said that he sometimes does not bother to add comments on the platform and instead just leaves a comment when responding to the permit request that, “in sheet number x along x there is x issue.” This means that the comment is not integrated with the drawing, which is one of the main functionalities of Bluebeam.

Every other participant we interviewed similarly used Bluebeam to add comments. Some, like participant 3, mentioned that they liked that the comments were separated from the actual design files because that ensures that comments are only seen by people within the organization. He feared that comments would be accidentally exported when sending the documents to the city or clients which might cause issues.

“In Revit you typically want to keep it pretty clean, to just like final record stuff. So that’s why Bluebeam and pdf are nice because … no one's accidentally gonna print it with my notes saying ‘hey this is completely wrong.’” - P3

Essentially, because Bluebeam is only used for comments, it provides a specialized area for notes. Other engineers that we interviewed also left comments in other software like Revit to ensure that they remember to make specific changes. Participant 4 mentioned that he will sometimes forget to implement comments left in Bluebeam because they are separate from the software he is working in, like ETABS. Stakeholders 3 and 4 both also used Microsoft Teams for some collaboration. Specifically, P3, a project manager, used Teams to notify other engineers when they should make various changes to the structural designs.

Repetitive, Time Consuming Tasks (RQ4)

💡 Key takeaway: The design process involves many tasks that can be automated or made easier by using different types of interactions.

Throughout the interviews, it was evident that civil engineers need to bounce between many software tools to do their job. This seems to be a potential pain point in their workflow. Regarding this issue, P1 mentioned,

“The design procedure is an iterative procedure. Maybe if there's an AI that’s going to enter, that will help me. But in general it is an iterative procedure.” -P1

In particular, he mentioned that the process of designing and then testing the loads, which takes place in two separate platforms, could be aided by something like artificial intelligence. This hints that combining the two programs, or adding some features from each into the other, could help his workflow. Another civil engineer that we interviewed mentioned that the most time-consuming task for him was choosing the type of beam to use when drafting designs. When asked if he thought that process could be streamlined, he replied that something could be done to help. When we probed this idea with our other civil engineers, they all seemed to agree.

An unexpected finding is that spreadsheets are often used to model the forces on buildings or even to draft designs. People mentioned that it was sometimes just easier to use a spreadsheet, because the spreadsheets were already set up as templates that only require users to plug in values to model the forces. Additionally, it took fewer steps to adjust structural elements in a cell than in other software. As these templates were specific to certain types of projects, they were only used for those purposes. This points to an issue with current platforms: they must be set up from scratch at the start of each project.

Variety of Software Leads to Issues (RQ5)

💡 Key takeaway: The variety of software used means that engineers must bounce between many platforms and don’t have time to learn the intricacies of each one.

Considering the large number of software tools, engineers mentioned finding it difficult to actually learn all of the uses of each one.

“Autocad is very sophisticated so you would be hard pressed to find engineer who would have enough skill to develop their own drawings” - P2

Essentially, because each program has so many features, it was difficult for these engineers to learn them all. Another commonly mentioned sentiment was that they knew something was possible in the software but had never invested the time to try it. Specifically, participants 2, 3, and 4 all knew that some of the platforms could be linked together, but never tried to do so because the option is hidden in many menus.

The Problem

Based on our user research, we came up with a set of problems to focus on. By addressing this problem statement we hope to increase the efficiency and overall workflow for civil engineers.

Problem Statement

Civil engineers and architects working on large projects face a significant challenge in beam selection during the early design stages, which can waste a lot of time and lead to many design iterations across many different platforms.

Primary Pain Points

It’s time consuming to determine the correct type of beam to use

Determining the correct beam choice is repetitive

Architects often make errors in beam choices

Engineers must bounce between many platforms to determine which beams to use

Beam choices take a lot of revisions

Ideating

Having determined the problem we wanted to address, we did some secondary research to see if any products on the market helped with this issue. We also created personas to help with the brainstorming process. Then, we fleshed out some ideas and created some UX flows.

We found that current platforms offer many powerful tools for modeling and structural analysis, such as live file sharing, automatic code compliance, and integration of multiple aspects of a project in one place. However, they are not as user-friendly, time-efficient, or seamless as our stakeholders need. Overall, complexity and manual processes cause delays and inefficiencies in beam selection during the design stages.

Competitive Audit

We created a few personas to help inform our designs. Two of these personas can be found below.

User Personas

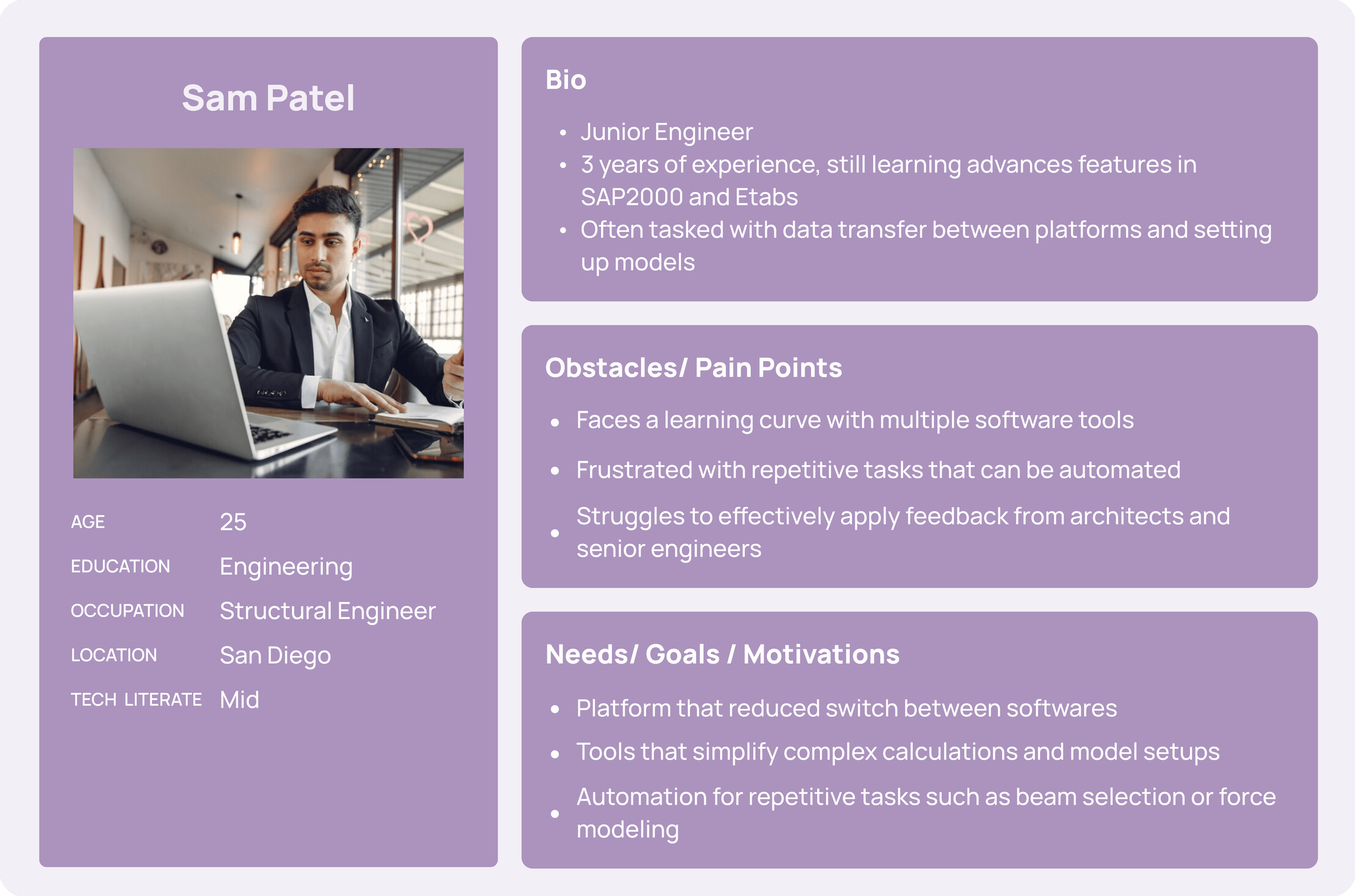

Our persona, Alex Carter, is a senior structural engineer who mostly manages other engineers. He represents a more senior engineer who often needs to communicate with architects and engineers. His persona highlights the need for integration between software tools and for reducing their complexity.

Sam Patel represents stakeholders who work directly on calculations, modeling, and ensuring structural designs meet safety and feasibility standards. His persona highlights the need for automation of repetitive tasks, software integration to reduce switching between platforms, and simplified calculation tools to support engineers at various experience levels.

We came up with two different solutions to address the issue of selecting beams efficiently.

Initial Ideas

Our first idea is a targeted approach that uses AI to suggest beam types, sizes, and materials based on structural analysis of the drawings. In this version, the user simply selects beams and uses AI to determine appropriate beam types. Users can then look at these recommendations and either accept or reject them. Finally, the beam choice made by the user is integrated into the design, and the user can provide feedback on their experience.

We propose that this flow would be integrated into Revit. This would allow drafters and architects to consider structural design principles, reducing work for structural engineers. Our persona, Jamie, would benefit from this as an architect because it would make beam selection simpler. At the moment, choosing beam types takes many steps, and this solution would reduce them. At the same time, this would reduce the workload of structural engineers like Sam and allow them to directly use the Revit model to conduct basic structural analysis. Below is a UX flow of what this would look like.

This second idea is more all-encompassing. It uses AI to analyze the whole model. Based on the analysis, it generates a report with recommendations for architects. In this way, it acts like a structural engineer. Then, the architect or drafter can implement these changes. The difference in this flow is that the analysis happens after all the beams are initially modeled.

We would also want this tool to be integrated into Revit to allow for less application switching. Additionally, all comments left by this tool would be in Revit, which reduces back and forth between Revit and annotation software like Bluebeam. This would likely be geared mostly towards structural engineers but be simple enough to be used by architects. It would reduce the number of design iterations, as it can point out issues that structural engineers would otherwise have raised after doing their own calculations. Architects could also benefit from this, as they could run this model to get feedback before even sending the designs to structural engineers. This would help Jamie by reducing the back and forth between him and structural engineers. We also created a UX flow of this idea.

Design Process

With some ideas in hand, we built them out and tested them. This helped us further refine our ideas.

Wireframes

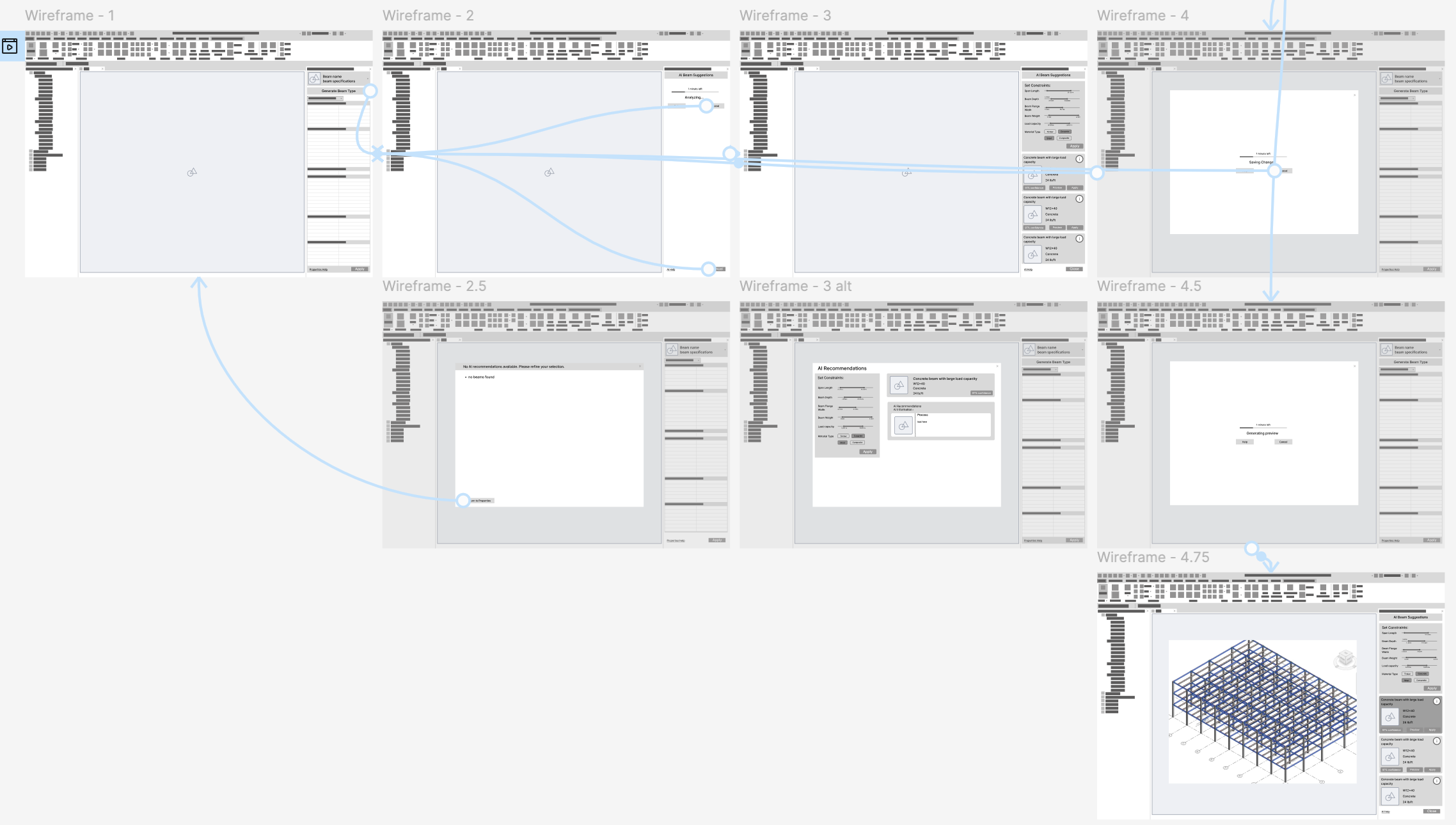

We instantiated both of our ideas as wireframes in order to have our stakeholders test them and give feedback.

Applying Beam Types: Idea 1

This first idea makes it so that people can click a button after selecting beams to get various beam recommendations.

Below is the basic flow of the wireframe:

Select beams

Click “Generate Beam Type” button

Panel changes to show that beams are being analyzed

Error screen if no beams are selected

Panel loads with beam recommendations

AI generated constraints that the user can change to regenerate suggestions

Various AI beam suggestion cards load

Users can apply correct beam type to selected beams
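The flow above can be sketched as a small piece of state logic. This is a minimal, hypothetical sketch and not the prototype's implementation (the prototype was a clickable wireframe): the names `PanelState`, `PanelResult`, `generate_beam_types`, `apply_beam_type`, and `stub_suggest` are assumptions made for illustration, and the AI step is replaced by a stub that returns fixed placeholder labels.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class PanelState(Enum):
    """Screens the side panel can show after the button is clicked."""
    ERROR_NO_SELECTION = "error: no beams selected"
    RECOMMENDATIONS = "recommendations"


@dataclass
class PanelResult:
    state: PanelState
    constraints: dict = field(default_factory=dict)   # editable by the user
    suggestions: list = field(default_factory=list)   # one entry per suggestion card


def generate_beam_types(
    selected_beam_ids: list,
    constraints: dict,
    suggest: Callable[[list, dict], list],
) -> PanelResult:
    """Handle a click on "Generate Beam Type" (hypothetical handler)."""
    if not selected_beam_ids:
        # Matches the wireframe's error screen when nothing is selected.
        return PanelResult(PanelState.ERROR_NO_SELECTION)
    suggestions = suggest(selected_beam_ids, constraints)
    return PanelResult(PanelState.RECOMMENDATIONS, dict(constraints), suggestions)


def apply_beam_type(model: dict, selected_beam_ids: list, beam_type: str) -> dict:
    """Apply the beam type the user accepted to the selected beams only."""
    for beam_id in selected_beam_ids:
        model[beam_id] = beam_type
    return model


def stub_suggest(beam_ids: list, constraints: dict) -> list:
    """Placeholder for the AI step; returns made-up labels, not real sections."""
    return ["Option A", "Option B"]
```

Changing a constraint and regenerating is simply a second call to `generate_beam_types` with the edited `constraints`, which keeps the user in control of when suggestions are recomputed and which one is applied.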

Analyzing All Structural Elements: Idea 2

In this flow, an AI acts like a structural engineer and leaves comments on the model.

Below is the basic flow of the wireframe:

Click “Generate Using AI” button

General AI recommendations are shown

Screen if there are no AI recommendations or error

Click next

AI comments are put over the model

Users can save comments to model

Usability Testing — Wireframes

We conducted usability testing to get our stakeholders' feedback on each of our ideas. We had people click through each of our wireframes and answer some questions. We created an interview guide to help structure these interviews. We conducted 2 interviews that each lasted about 30 minutes.

Applying Beam Types: Idea 1

💡 Key takeaway: Both of our stakeholders preferred this idea but felt that the constraints could be refined and beam suggestion cards could be better formatted.

Positive feedback:

P1 liked that it reduced mental load by using data-driven decisions rather than relying on estimation

“This alone would be huge!” - P1

P1 said the idea allows for more accurate pricing of buildings earlier in the process

P2 liked the popup of the AI process

Criticism:

P2 was nervous about whether AI could produce accurate results

P1 suggested highlighting the beam options by what they would be “best for” (ex: “best for vibrations”)

P1 mentioned that we could remove some of the constraints to make the project more narrowly scoped

Both participants mentioned that some of our constraints depend on one another, which makes them redundant

P2 suggested adding constraints for designers like beam connection types

P2 said that the AI would have to account for when selected beams should have different types

P1 mentioned that text entry instead of sliders would help streamline the process

Analyzing All Structural Elements: Idea 2

💡 Key takeaway: Our stakeholders liked the idea but felt that it could be more narrowly scoped as it currently is too complex for architects and drafters and could reduce managerial control over the project.

Positive feedback:

P2 felt that it had a large potential for being useful to structural engineers

P1 mentioned that he believes Revit would want to integrate this feature into their product

Criticism:

P1 was worried that the tool might reduce his control as a project manager over changes to the Revit model

P2 was concerned that this prototype might have feedback that is too complex for architects and drafters

P1 felt that this might be too complex to integrate directly into Revit

P2 mentioned that if the AI had a lot of comments they might overlap and be difficult to manage

P2 suggested adding a comment filter to the AI comments

P1 felt like we could narrow down the scope of the product

Based on the usability testing, we decided to move forward with our first idea: AI beam suggestions. We created a high-fidelity prototype to further instantiate our idea.

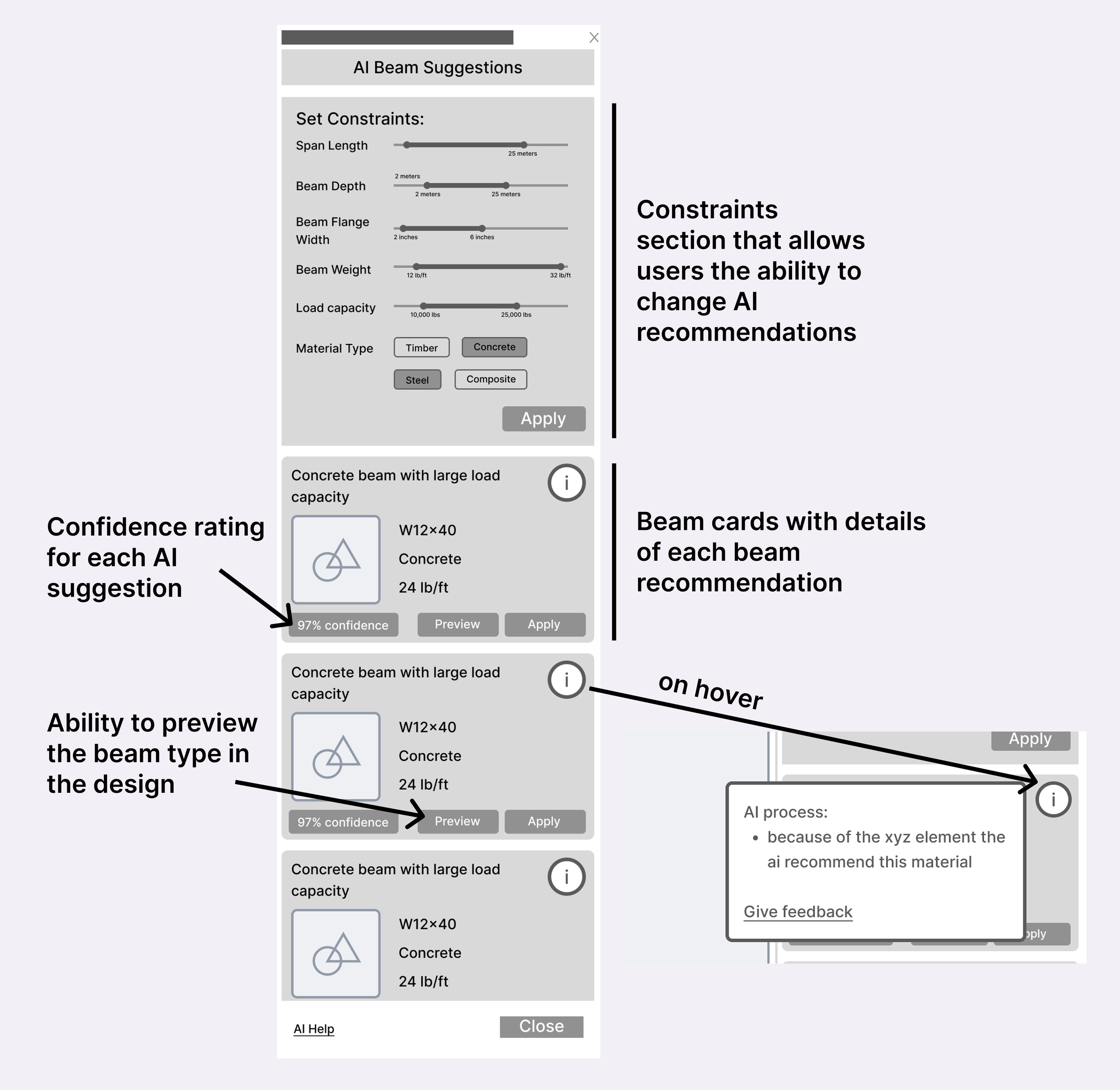

Initial High Fidelity Prototype

Changes made based on usability testing:

Changed the sliders to allow people to use text entry as well

Changed some of the units we used for the constraints

Highlighted the beam options by what they would be “best for”

Reworked our constraints by making sure they are not repetitive

Testing and Iterating

To further ensure that our designs work for our stakeholders, we conducted usability testing again. This time we created some alternate versions of the screens that we had our stakeholders compare and comment on.

Usability Testing — HiFi

For this usability testing round, we also recruited 2 stakeholders to test our prototype. Each of these sessions also lasted about 30 minutes.

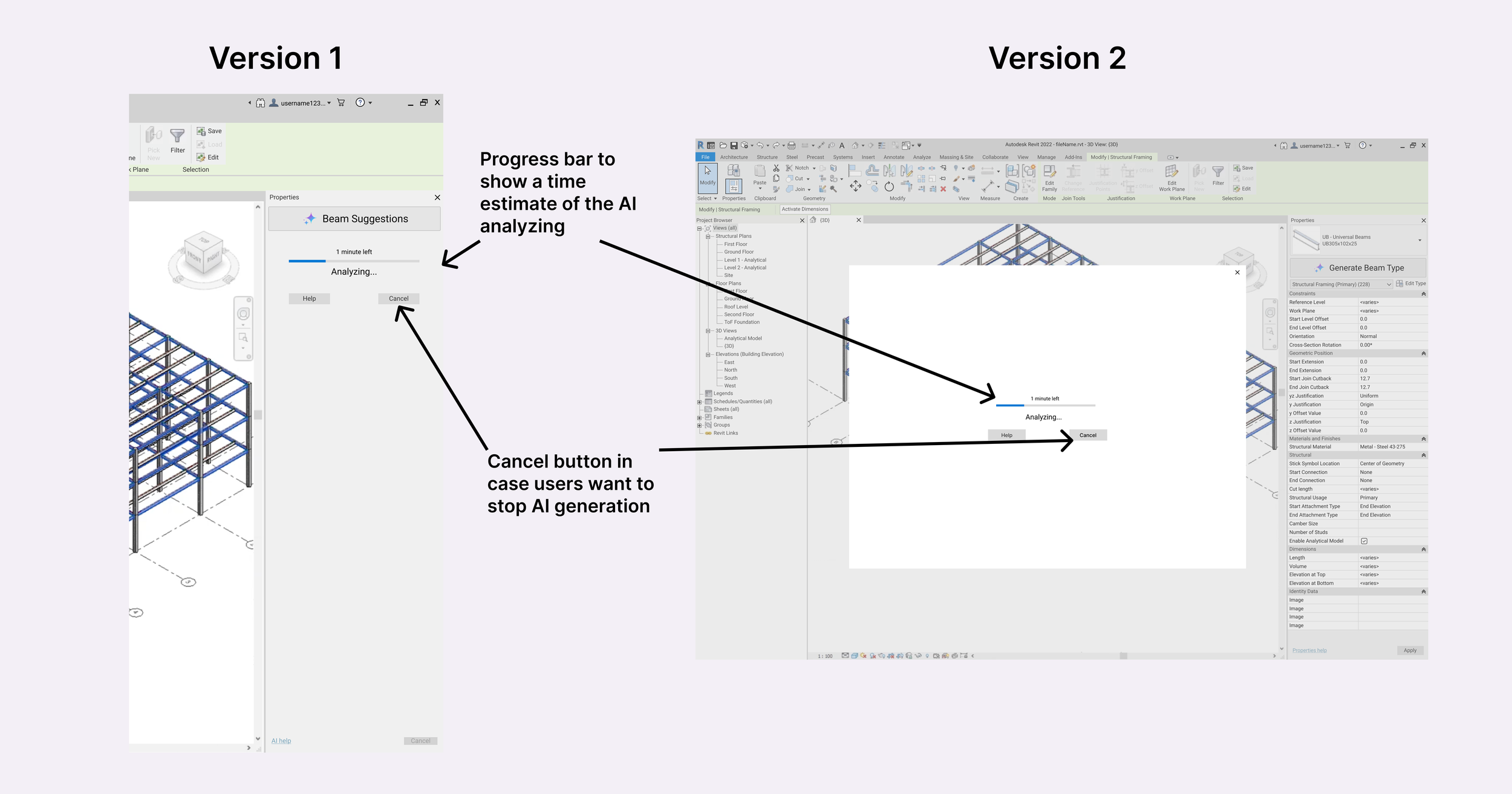

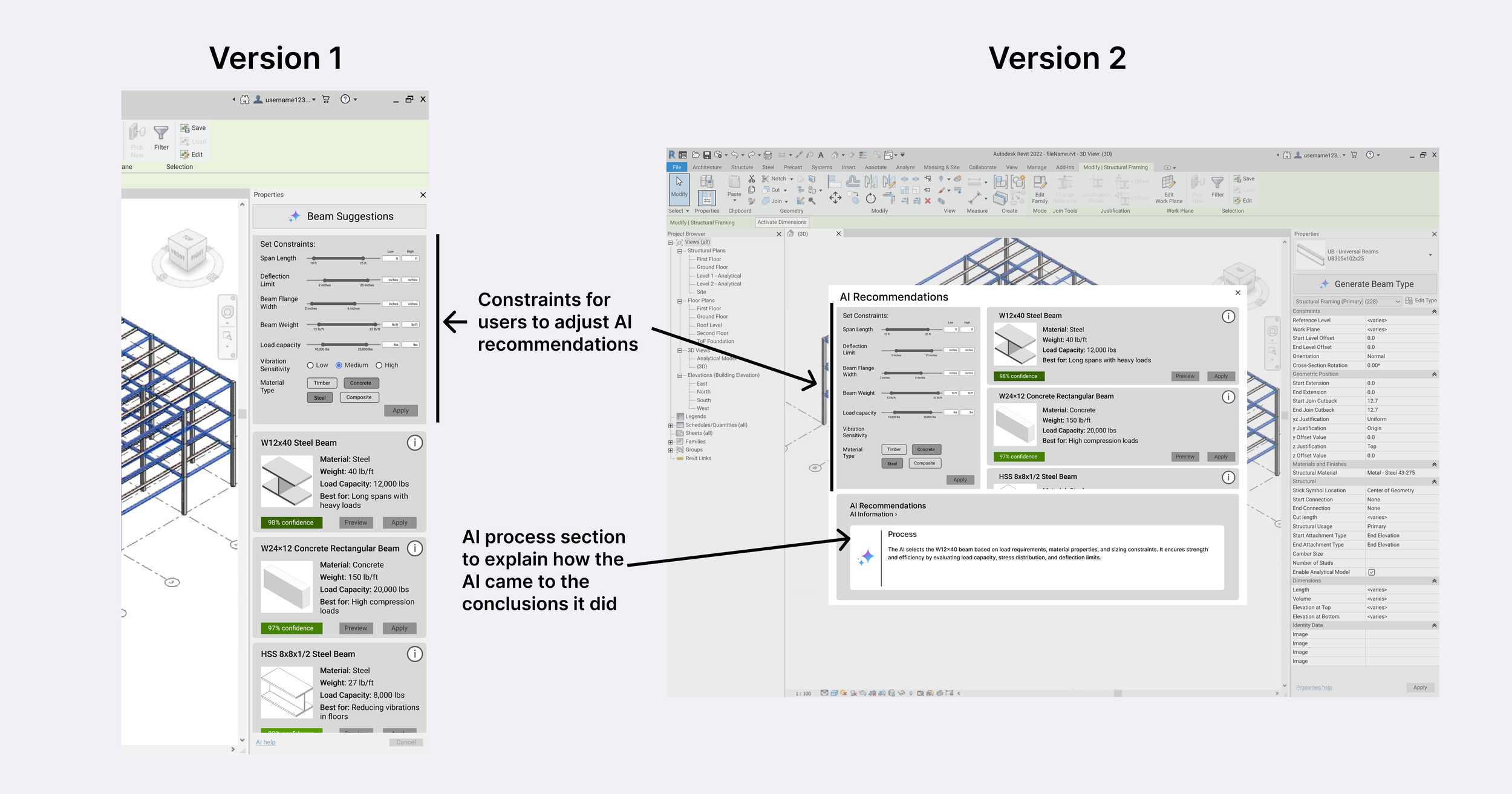

We decided to create multiple versions of our screens for our stakeholders to compare. The main difference is that version 1 has all the UI elements placed in a side panel, while version 2 has all information in a pop up window.

Usability Testing Insights

💡 Key takeaway: Our stakeholders preferred version 1 and expressed that we could further refine our AI constraints, beam cards, and preview feature.

Comparison of Screen Versions:

Both P1 and P2 preferred having information in a sidebar rather than a pop up window

Both stakeholders liked the AI process section in version 2

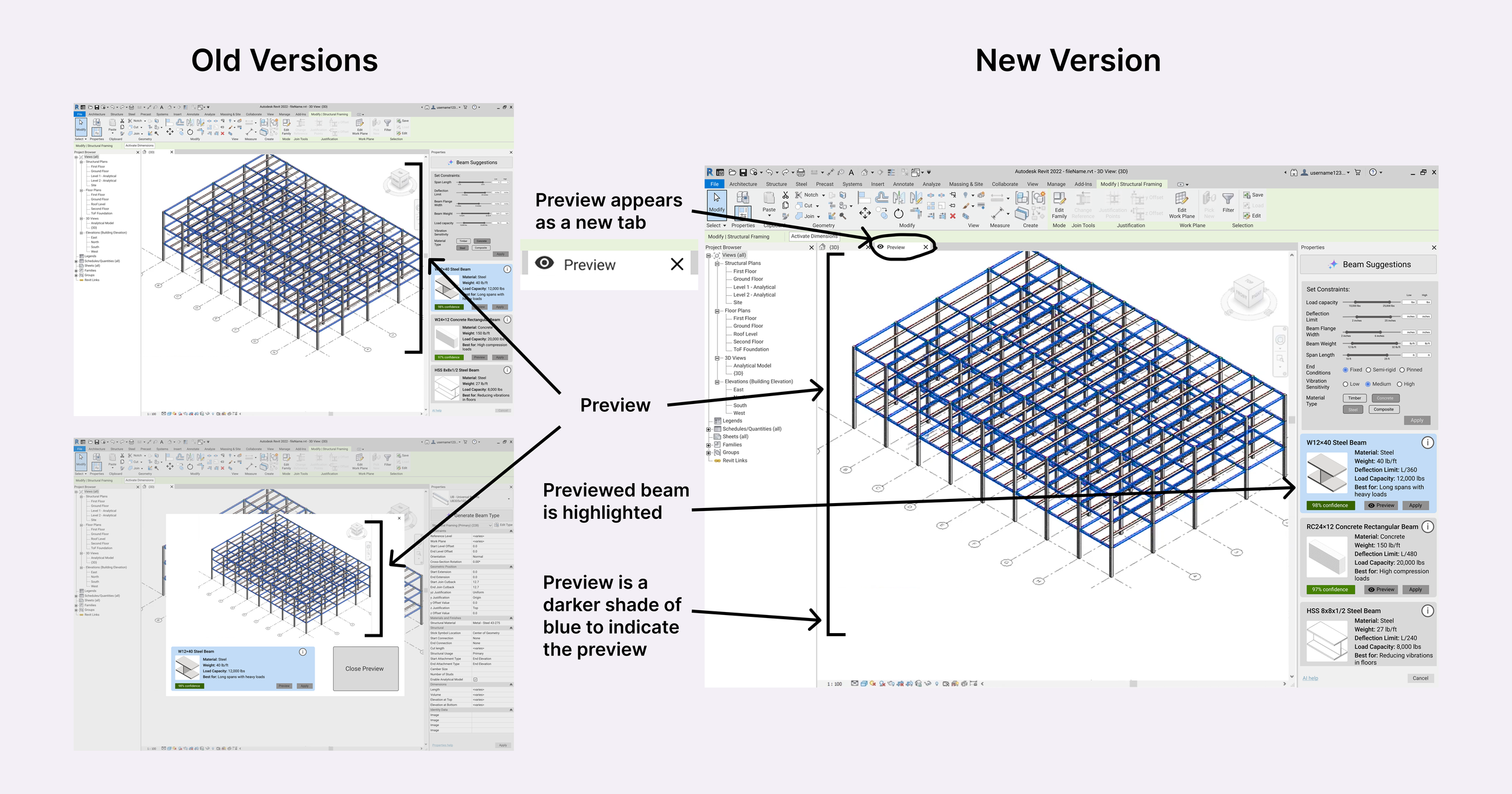

P1 and P2 wanted some sort of pop up for the preview because the preview was unclear in both versions: it either lacked signifiers (sidebar version) or took up too much screen real estate (popup version)

Positive Feedback:

P1 was very excited by this idea, saying, “if you do this AI thing in Revit it will help me!”

P2 appreciated the added constraints

Criticism:

Both P1 and P2 tried to click on the confidence rating

P1 and P2 struggled to read the buttons and wanted them to have more contrast and be more consistent

P2 mentioned that the error screen should still be in the side bar and formatted better

P1 suggested adding deflection limit to beam cards

P2 wanted us to add more designer controls as constraints

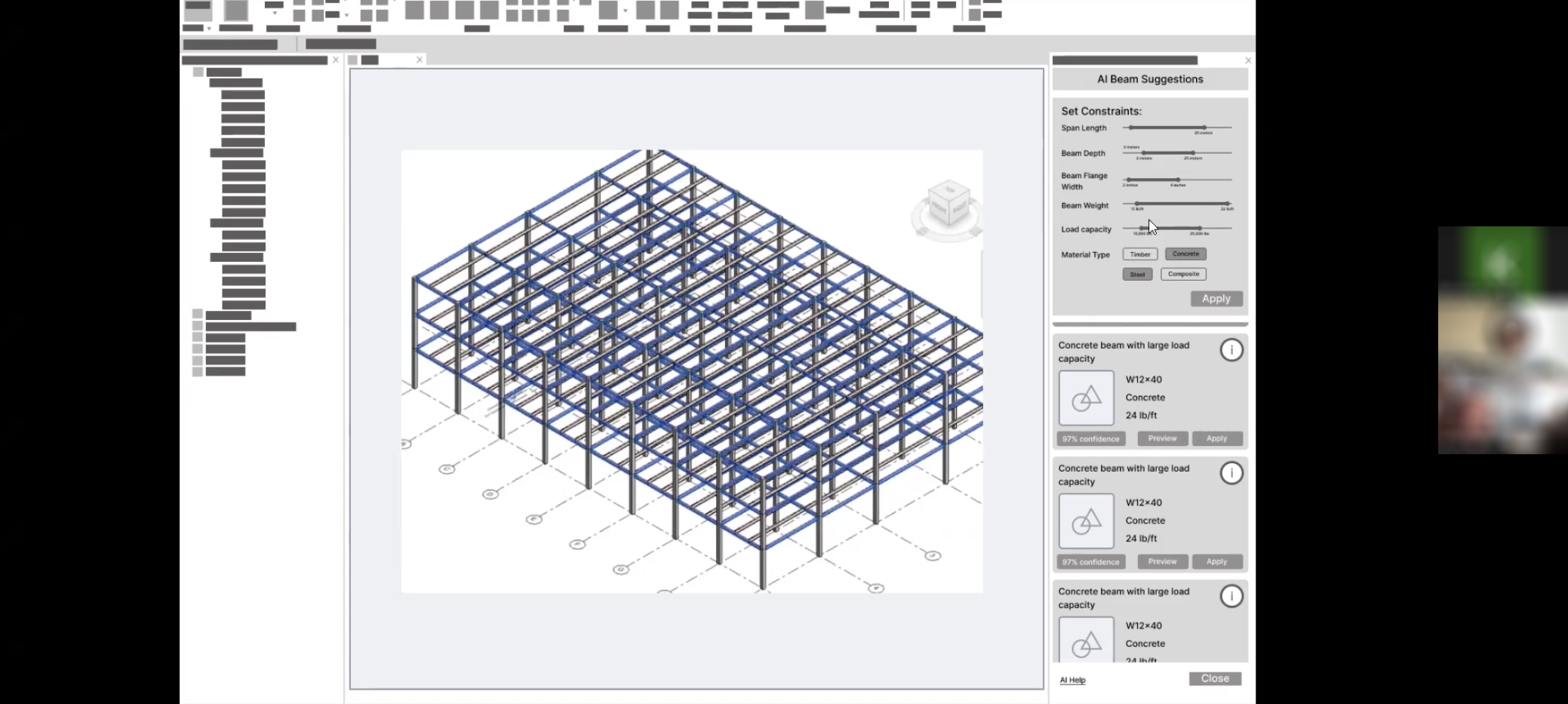

Final Prototype

After getting feedback on our design, we further refined our prototype to be more streamlined.

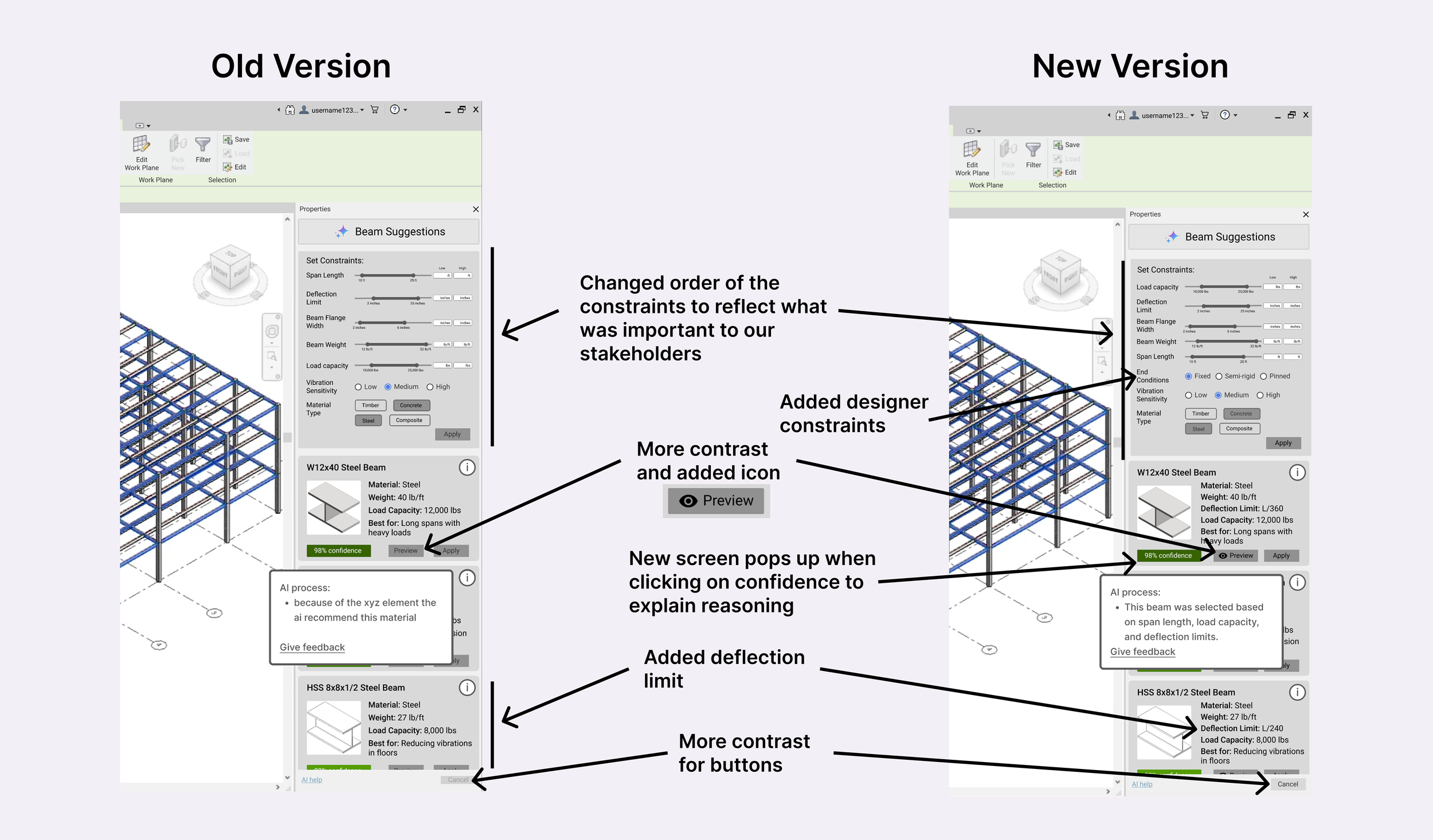

Changes made based on usability testing:

Buttons have more contrast and are more consistent

New AI process screen that comes up when you click on the confidence

Error screen (2.5) moved to the side bar and formatted better

Preview is displayed as new tab instead of pop up or just changing main screen

Added deflection limit to beam cards

Added end conditions for beams (fixed condition, pinned) as a constraint

Deprioritized span length (moved to the bottom)

Changed concrete beam from W to RC – recommendation of stakeholder

Moved load capacity to the top

Added icon to preview button
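To make the panel contents after these changes concrete, here is a hypothetical data sketch of a beam card and of the constraint ordering. The field names, the `display_rank` helper, and the sample values are illustrative assumptions and do not come from the prototype; only the ideas (a "best for" label, a confidence rating, a deflection limit on the card, fixed or pinned end conditions, load capacity first, span length last) are taken from the changes listed above.

```python
from dataclasses import dataclass
from enum import Enum


class EndCondition(Enum):
    """End conditions added as a constraint after usability testing."""
    FIXED = "fixed"
    PINNED = "pinned"


@dataclass(frozen=True)
class BeamCard:
    """One suggestion card; all values are placeholders for illustration."""
    label: str             # e.g. "RC" for a concrete beam, per stakeholder advice
    best_for: str          # e.g. "best for vibrations"
    confidence: float      # 0.0 to 1.0; clicking it opens the AI process screen
    load_capacity: str     # shown as text; units are left to the designer
    deflection_limit: str  # added to the card at P1's suggestion


def display_rank(constraint_name: str) -> int:
    """Load capacity goes to the top, span length to the bottom."""
    if constraint_name == "load_capacity":
        return 0
    if constraint_name == "span_length":
        return 2
    return 1


def order_constraints(constraints: dict) -> list:
    """Return constraint names in side-bar order (stable for the middle group)."""
    return sorted(constraints, key=display_rank)
```

For example, `order_constraints({"span_length": 6.0, "end_condition": EndCondition.PINNED, "load_capacity": 40.0})` returns `["load_capacity", "end_condition", "span_length"]`.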

Before And After Story: Preview Screen

Previously, the preview button either overlaid the preview on the main design or opened it as a popup. After user testing, we found that users had difficulty recognizing that the preview had appeared in the side panel version, and in the popup version they did not like how it covered the rest of the UI. Therefore, we redesigned the screen to clearly highlight the selected AI recommendation and show the selection in a new tab, in a darker blue, so that users can clearly see the recommendation on their sketch layout.

Before And After Story: Side Bar

During our user testing, one of our users specifically brought up that ‘Load Capacity’ is one of the most important constraints, so we rearranged our constraints to reflect this. Another participant pointed out that ‘Deflection Limit’ would be helpful to add to the beam recommendation cards. We also noticed that our stakeholders had a hard time finding the preview button, so we added an eye icon next to it to make it stand out. Finally, we made our components more consistent overall.

Outcome

By creating this new feature, we hope to streamline the process of specifying beam types in Revit. Our users listed a number of potential benefits from this idea.

Reducing repetitive tasks

Allowing for more accurate estimates of project costs earlier in the design process

Reducing the number of iterations in the design process

Creating a less time consuming process for beam selection

Lessening the need for using multiple platforms to do structural analysis

Reflection

Reaching Out to Stakeholders Early & Often

Finding stakeholders and getting responses was a challenge throughout this project. I reached out to over 25 civil engineering firms or civil engineers to try to get a response. As getting a response was difficult, I resorted to different ways to recruit participants like phone calls, emails, and LinkedIn. Considering our time constraints, we also had to ensure that we reached out to stakeholders often to be able to do our usability testing.

Balancing AI and User Trust

We found that users did not appreciate AI having control over their workflows; they want to retain control over their tasks. Users want to feel like they are in the driver’s seat and that they know what goes into each decision. Essentially, AI should explain the reasoning behind its choices so that users understand them.

The Value of Testing with a Diverse User Base

We decided to interview and do usability testing with a variety of stakeholders with different roles to try to get different perspectives. This ended up giving us many different angles to our idea and helped shape the final result a lot. For example, we talked to a project manager who gave us a lot of insights into what clients and engineering firms would want to consider. At the same time, we also got the insights of people who would actually use our platform to look at what they would want prioritized.